reespec — A Framework for Human-AI Collaboration

Case Study

Pelerin Tech

February 2026

As AI-assisted development became part of our daily workflow, we noticed a recurring problem: the gap between what a human intends and what an agent delivers. Vague briefs produce vague results. Without structure, decisions get lost, work gets repeated, and quality is inconsistent.

We needed a methodology — not just a prompt template, but a full collaboration framework that could work across projects and team members.

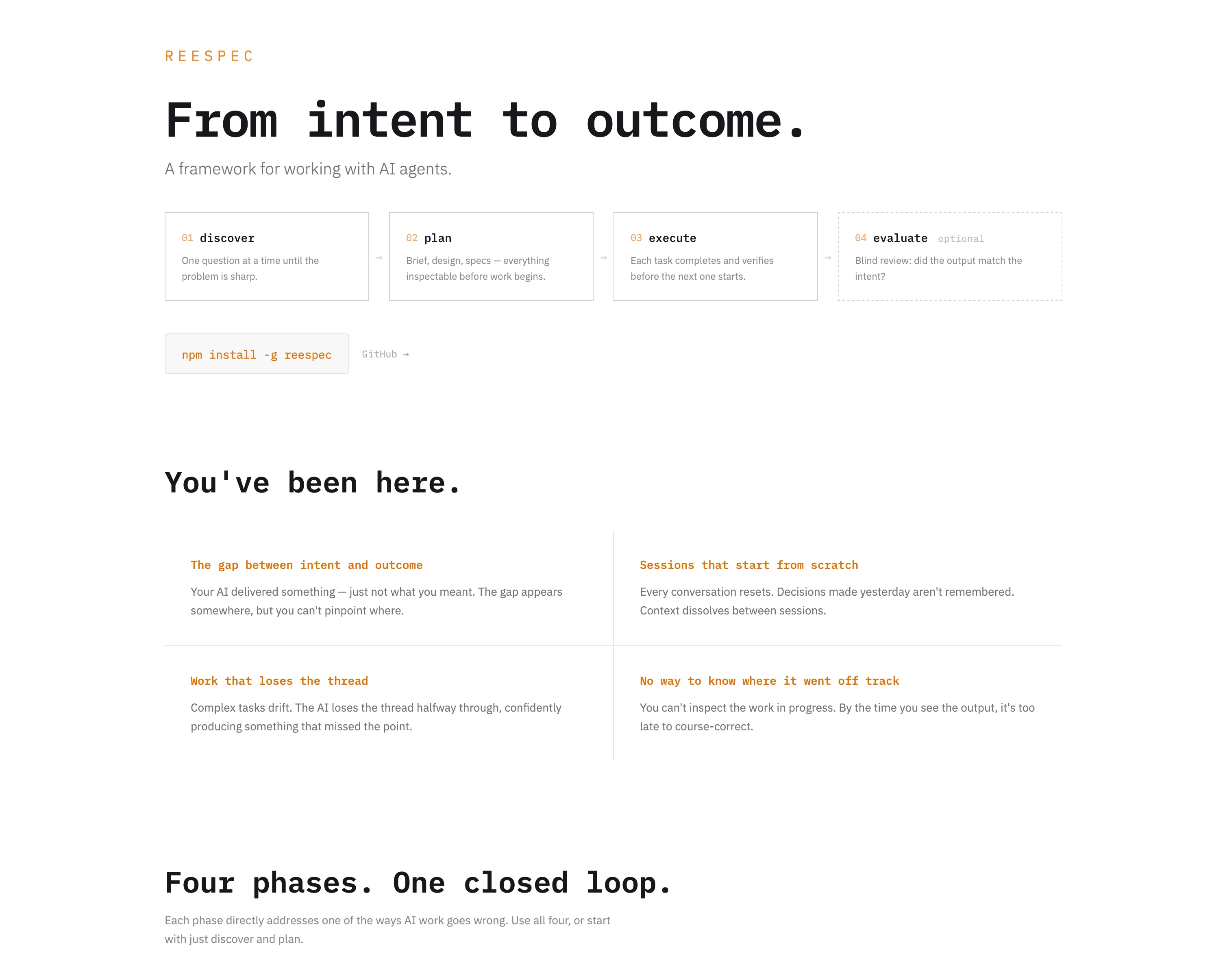

reespec is a four-phase framework and CLI tool that structures human-agent collaboration through a repeatable cycle.

The tool is available as a CLI on npm and open-sourced on GitHub. It initialises in any project with a single command, and the artifact format is plain markdown — no lock-in, no proprietary formats.

We use reespec on our own projects and on client engagements. The pelerin.tech website itself was built using reespec to plan and execute every feature.

reespec demonstrates our depth in AI consulting and developer tooling. We don't just use AI tools — we think carefully about how humans and agents should collaborate, and we build the infrastructure to make that collaboration reliable and traceable. If your team is adopting AI-assisted development and needs structure, process, or custom tooling, this is the kind of work we do.
